A little history on my philosophy around high-availability

Around the year 2000, when I was working in network operations for a large wireless telco, a very senior network architect explained to me the company’s philosophy on building high availability solutions into the network. The phrase I remember from that conversation was “we don’t build redundant networks, we build resilient networks.” The difference is that while redundant networks fail over to secondary paths to resume traffic, resilient networks don’t go down at all. This concept has stuck with me ever since, and I tend to tackle high-availability problems of all kinds with this idea in mind. It’s frankly been very difficult to build solutions that are resilient across the entire stack, mostly because infrastructure technology hasn’t quite gotten there yet.

Things may have changed…

I recently had a meeting with a customer to discuss local high availability for SQL. This customer has a very large multi-node clustered SQL environment (hundreds of TBs of data, hundreds of databases, hundreds of instances, many clusters, many nodes per cluster) and has been testing SQL database mirroring as an alternative to traditional Windows Failover Clustering. The meeting wound up focusing primarily on leveraging VPLEX as an alternative to SQL mirroring, and the reasons for that decision suddenly reminded me of the Resiliency vs Redundancy discussion I had years ago. A VPLEX solution potentially solves the same problem as DB mirroring, and does it with less complexity and less risk.

VPLEX Local as a Resilient HA solution

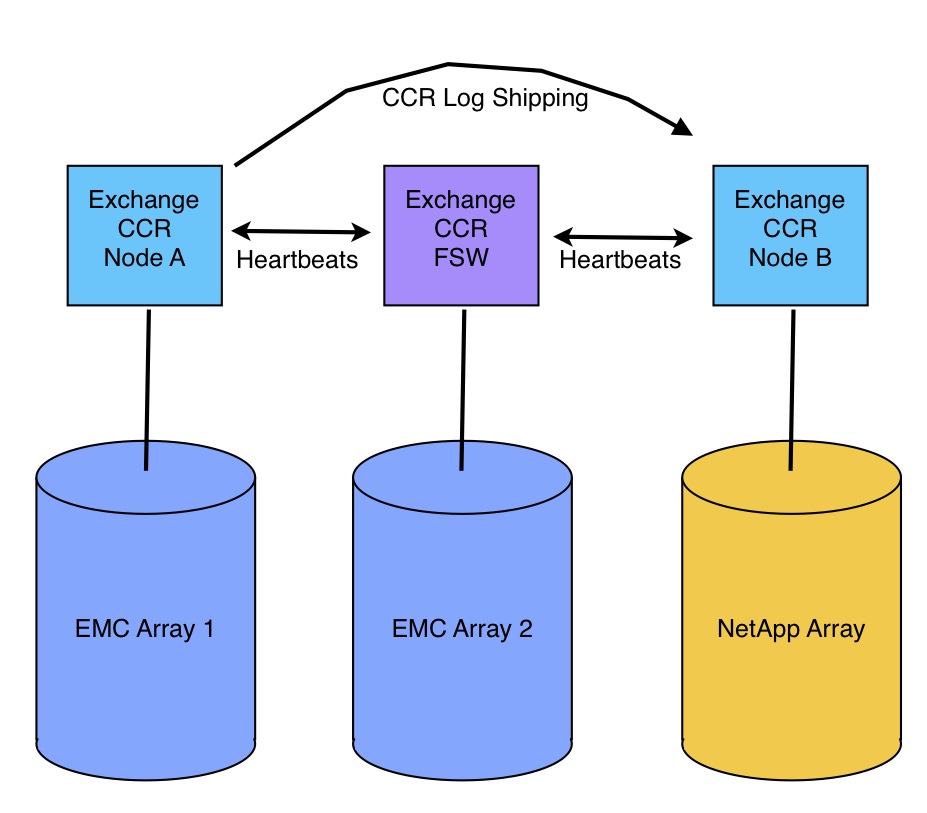

One of the many features of VPLEX is its ability to mirror data across multiple storage arrays and present that mirror as a single LUN to the host. For customers already running large multi-node MSCS clusters, the LUN appears just like any normal storage LUN, and Windows/SQL treat the LUN normally. There are several reasons VPLEX should be considered as an alternative to database mirroring. (Much of this applies to Exchange CCR as well.)

VPLEX hardware is inherently Resilient. A VPLEX cluster is an N+1 cluster of loosely coupled nodes, cooperating with each other, but not depending on each other. Hosts can access any of the hosted data, through any of the ports, on any of the cluster nodes. If a node fails for any reason, the remaining nodes continue serving IO for any data. Except for a dead path on the host side (managed by PowerPath or MPIO), there is no failover process, and no cache mirroring to worry about. The potential performance impact of a failure is equal to 1 divided by the quantity of that component in the cluster (for example, 128 x 8 Gb/s ports across 8 director nodes for a large VPLEX Local cluster).
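To put numbers on that 1/N rule, here is a minimal Python sketch. The function name `failure_impact` is my own, and the counts are the ones quoted above for a large VPLEX Local cluster. It assumes load is spread evenly across identical components, which real workloads only approximate.

```python
def failure_impact(component_count: int, failed: int = 1) -> float:
    """Fraction of aggregate capacity lost when `failed` out of
    `component_count` identical, evenly loaded components go down."""
    if component_count <= 0:
        raise ValueError("component_count must be positive")
    if not 0 <= failed <= component_count:
        raise ValueError("failed must be between 0 and component_count")
    return failed / component_count


# Counts taken from the text: 128 ports across 8 director nodes.
port_loss = failure_impact(128)
director_loss = failure_impact(8)

print(f"one port lost:     {port_loss:.2%}")      # 0.78%
print(f"one director lost: {director_loss:.2%}")  # 12.50%
```

Under that even-load assumption, losing a single port costs under 1% of port capacity, and losing a whole director costs an eighth of the cluster, with the surviving components carrying on without a failover step.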

In addition, because VPLEX utilizes a write-through cache, there is never any dirty cache data (data in cache that has not been committed to disk) in a VPLEX system. A power outage or VPLEX hardware failure does not put data at risk.
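The write-through idea can be illustrated with a toy model. This is only a sketch of the general technique, not how VPLEX is implemented: the class `MirroredWriteThroughVolume`, its methods, and the use of plain dictionaries as stand-ins for storage arrays are all assumptions made for illustration.

```python
class MirroredWriteThroughVolume:
    """Toy write-through volume mirrored across several 'arrays'.

    A write is treated as complete only after every mirror leg holds it,
    so the cache never contains the only copy of any block."""

    def __init__(self, legs):
        if not legs:
            raise ValueError("at least one mirror leg is required")
        self.legs = legs        # each leg is a dict standing in for an array
        self.read_cache = {}    # read cache only; never holds dirty data

    def write(self, block: int, data: bytes) -> None:
        for leg in self.legs:   # commit to every array first
            leg[block] = data
        self.read_cache[block] = data  # then keep a copy to speed up reads

    def read(self, block: int) -> bytes:
        if block in self.read_cache:
            return self.read_cache[block]
        for leg in self.legs:   # cache miss: any surviving leg will do
            if block in leg:
                self.read_cache[block] = leg[block]
                return leg[block]
        raise KeyError(block)

    def lose_cache(self) -> None:
        """Simulate a power outage or director failure wiping the cache."""
        self.read_cache.clear()


array_a, array_b = {}, {}
volume = MirroredWriteThroughVolume([array_a, array_b])
volume.write(0, b"committed txn")

volume.lose_cache()   # cache contents are gone...
array_a.clear()       # ...and one whole array fails as well
recovered = volume.read(0)
print(recovered == b"committed txn")
```

Because nothing is acknowledged before it reaches both legs, wiping the cache loses no data, and the read is still satisfied from the surviving array. A write-back cache would trade that safety for lower write latency.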

Other Advantages of using VPLEX over SQL Database Mirroring

Improved Performance:

- Compared with SQL Database mirroring, VPLEX mirroring has significantly less impact on transaction performance for writes and can improve transaction performance in some cases due to the large read cache in the VPLEX directors. (Note: I am comparing to DB Mirroring in Full-Safety mode since the customer’s requirement was a zero-data-loss solution.)

Non-Disruptive Storage Failover:

- In the event of a storage failure, SQL Mirroring must perform a cluster node failover which takes a few seconds at best, possibly disrupting applications. VPLEX provides completely non-disruptive failover when a storage failure occurs. (A server hardware failure still triggers a node failover as it would in any other failover clustering scenario.)

Less Management Overhead:

- From a management perspective, using VPLEX instead of SQL Database mirroring gives the SQL DBAs fewer SQL instances and fewer moving parts to manage on a daily basis. The storage team just presents a mirrored LUN from VPLEX to the cluster and it’s business as usual for the DBAs.

- VPLEX also allows the storage team to non-disruptively migrate data between storage arrays behind VPLEX to balance load, perform hardware refreshes, or resolve capacity problems. VPLEX performs the migration at the direction of the storage admins.

Reduced Risk:

- Reducing management complexity also reduces risk. With a high number of database instances and db mirrors involved in a large environment like this one, the chance of one of those mirrors having a problem, or being configured incorrectly, is increased. DBAs can rely on VPLEX mirroring all of the data, 24x7x365, even when host maintenance is being performed.

Reduced Cost:

- When compared with the SQL Database Mirroring solution, the VPLEX solution reduced the number of physical servers needed in this environment, reducing cost enough to more than offset the cost of VPLEX itself. Combined with reductions in soft costs, like reduced DBA management overhead, VPLEX will actually save them quite a bit of money. Increased uptime during storage refresh and maintenance will increase revenues in this case as well.

A Distributed Future:

- Next year, when a second datacenter is online nearby, the first VPLEX Local cluster can be connected to another VPLEX cluster in the new datacenter. Then the SQL cluster nodes and data can be distributed across both datacenters, providing protection from entire datacenter outages, or solving space constraints with no changes to the application or servers, and no downtime.

I wonder how many other customers would like to build more resilient infrastructures?

If you combine a VPLEX solution with a true cluster file system and an active-active database engine (e.g., Oracle RAC), you can eliminate the disruption caused by server hardware failures. It’s just a matter of time now until the entire stack can be designed for true resiliency with very little management overhead. I can’t wait to see what happens.

The following EMC White Paper has a lot of good information about using VPLEX in this same context: