One of the customers I work with regularly has a set of key MS SQL databases, totaling over 100TB in size, that process transactions for their customer-facing systems. If these databases are down, no transactions can be processed and customers looking to spend money will go elsewhere. The booking rate related to these databases is ~$90,000 per minute, all day, every day.

Today these databases live on Symmetrix DMX storage, which has been very reliable and performant. As happens in the IT world, some of these Symmetrix systems are getting long in the tooth, and we are working on refreshing several older DMX systems into fewer, newer VMAX systems. Aside from the efficiency and performance benefits of sub-LUN tiering (EMC FAST VP), and the higher scalability of VMAX vs DMX as well as pretty much any other storage array on the market, EMC has added another new feature to VMAX that is particularly advantageous to this customer: Federated Live Migration.

Tech Refresh and Data Migrations:

Tech refresh is a fact of life, and data migrations, as a result, are commonplace. The challenge with a data migration is finding a workable process and then shoehorning that process into existing SLAs and maintenance windows. In general, data migrations are disruptive in some way or another, and the level of disruption depends on the technology used and the type of migration. EMC has a long list of migration tools that cover our midrange storage systems, NAS systems, and high-end Symmetrix arrays. The downside with these and other vendors’ migration tools is that some level of downtime is required.

There are 3 basic approaches:

- Highest Amount of Downtime:

  - Take system down, copy data, reconfigure system, bring system online

- Less Downtime:

  - Copy data, take system down, copy recent changes, reconfigure system, bring system online (EMC Open Replicator, EMCopy, RoboCopy, Rsync, EMC SANCopy, etc.)

- Even Less Downtime:

  - Set up new storage to proxy old storage, reconfigure system, serve data while copying in the background (EMC Celerra CDMS, EMC Symmetrix Open Replicator)

  - Traditional virtualization systems like Hitachi USP/VSP, IBM SVC, etc. are similar to this as well, since you must take some level of downtime to get hosts configured through the virtualization layer, after which you can non-disruptively move data.

Common theme in all 3? Downtime!

Non-Disruptive Migrations are becoming more realistic:

Some time ago, EMC added a feature to PowerPath called Migration Enabler (PPME), which is a way to non-disruptively migrate data between LUNs and/or storage arrays from the host perspective. PowerPath Migration Enabler can also leverage storage-based copy mechanisms and help with cut over. This is very helpful, and I have multiple customers who have successfully migrated data with PPME. The challenge has been getting customers to upgrade to newer versions of PowerPath that include the PPME features they need for their specific environment. Software upgrades usually require some level of downtime, at least on a per-host basis, and we run into maintenance windows and SLAs again.

EMC Symmetrix Federated Live Migration (FLM)

For Symmetrix VMAX customers, EMC has added a new capability that could make data migrations easy as pie for many customers. With FLM, a VMAX can migrate data into itself from another storage array, and perform the host cutover, automatically, and non-disruptively. The VMAX does not have to be inserted in front of the other storage array, and there is no downtime required to reconfigure the host before or after the migration.

Federation is the key to non-disruptive migrations:

The way FLM works is really pretty simple. First, the host is connected to the new VMAX storage without removing the connectivity to the old storage. The VMAX is also connected to the old storage array directly (through the fabric in reality). The VMAX system then sets up a replication session for the devices owned by the host and begins copying data. This is all pretty straightforward. The smart stuff happens during the cut over process.

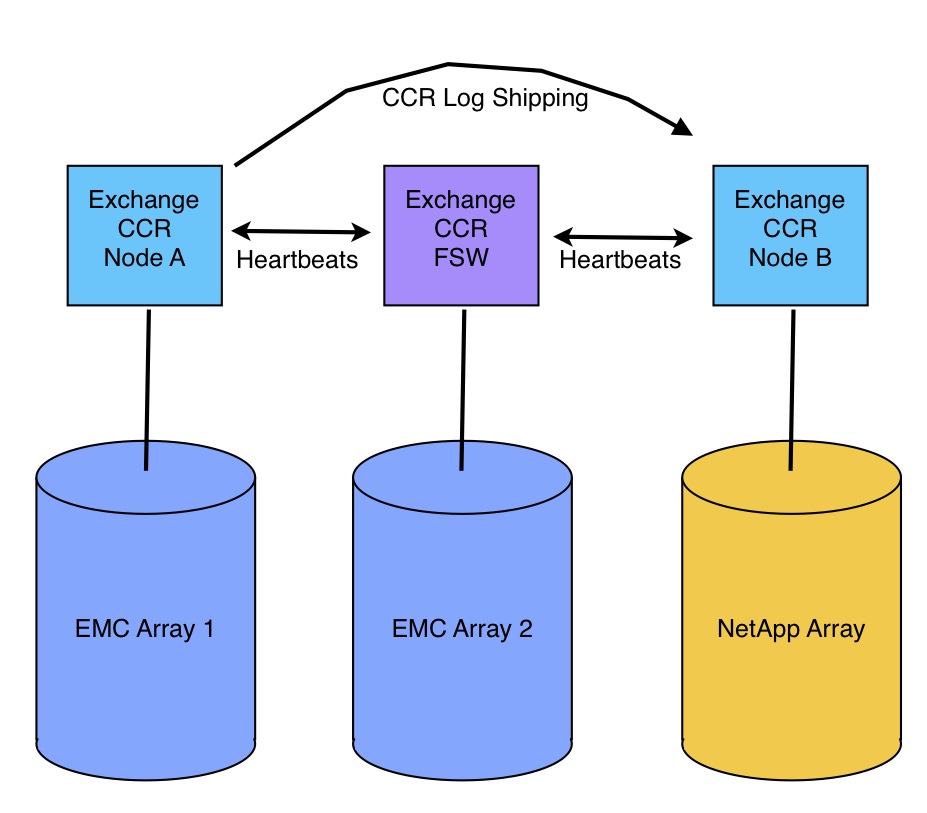

As you probably know, the VMAX is an Active/Active storage system, so all paths from a host to a LUN are active. Clariion, like most other midrange systems, is an Active/Passive storage system. On Clariion, paths to the storage processor (SP) that owns the LUN are active, while paths to the non-owner are passive until needed. PowerPath and many other path management tools support both active/active and active/passive storage systems.

Symmetrix FLM leverages this active/passive support for the cut over. Essentially, the old storage system is the “owning SP” before and during the migration. At cut over time, the VMAX essentially becomes the owning SP and its paths go active while the old paths go passive. PowerPath follows the “trespass” and the host keeps on chugging. To make this work, the VMAX actually spoofs the LUN WWN, host ID, etc. from the old array, so the host thinks it’s still talking to the old array. Filesystems and LVMs that signature and track LUNs based on WWN and/or host ID are unaffected as a result. The cut over process is nothing more than a path change at the HBA level. The data copy and cut over are managed directly from the VMAX by an administrator.
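To make the path flip a little more concrete, here is a toy Python model of the idea described above. It is only a sketch of the concept, not EMC's implementation or any PowerPath API: the names `Lun`, `PathState`, and `cut_over`, along with the sample WWN, are made up for illustration. The point it shows is that the LUN identity the host sees never changes; only which array's paths are active does.

```python
from dataclasses import dataclass, field
from enum import Enum


class PathState(Enum):
    ACTIVE = "active"
    PASSIVE = "passive"


@dataclass
class Lun:
    """Hypothetical host view of one LUN reachable through two arrays."""
    wwn: str                                    # identity seen by the host
    paths: dict = field(default_factory=dict)   # array name -> PathState

    def active_array(self) -> str:
        """Return the single array whose paths are currently active."""
        active = [name for name, state in self.paths.items()
                  if state is PathState.ACTIVE]
        if len(active) != 1:
            raise RuntimeError("expected exactly one owning array")
        return active[0]

    def cut_over(self, new_owner: str) -> None:
        """Flip ownership: new owner's paths go active, all others passive.

        The WWN is deliberately left untouched, mirroring the identity
        spoofing described in the text.
        """
        if new_owner not in self.paths:
            raise KeyError(f"no paths to {new_owner}")
        for name in self.paths:
            self.paths[name] = (PathState.ACTIVE if name == new_owner
                                else PathState.PASSIVE)


# Before cut over: the old DMX "owns" the LUN, the VMAX paths are passive.
lun = Lun(wwn="example-wwn-0001",
          paths={"DMX": PathState.ACTIVE, "VMAX": PathState.PASSIVE})
wwn_before = lun.wwn

lun.cut_over("VMAX")

print(lun.active_array())        # which array now services I/O
print(lun.wwn == wwn_before)     # host-visible identity is unchanged
```

From the host's point of view in this model, nothing is remounted or rediscovered; the multipath layer simply sends I/O down a different set of paths.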

Of course there are limitations… FLM initially supports only EMC Symmetrix DMX arrays as the source and requires PowerPath. From what I gather, this is not a technical limitation, however; it’s just what EMC has tested and supports. Other EMC arrays will be supported later, and I have no doubt it will support non-EMC arrays as a source as well.

Solving the $5 million problem:

Symmetrix Federated Live Migration became a signature feature in our VMAX discussions with the aforementioned customer because they know that for every minute their database is down, they lose $90,000 in revenue. Even with the fastest of the 3 traditional methods of migration, they would be down for up to an hour while 12 cluster nodes are rezoned to new storage, LUNs masked, drive letters assigned, etc. A reboot alone takes up to 30 minutes. 1 hour of downtime equates to $5,400,000 of lost revenue. By the way, that’s quite a bit more than the cost of the VMAX, and FLM is included at no additional license cost, so FLM just paid for the VMAX, and then some.
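The arithmetic behind that number is simple enough to check in a few lines of Python. This is just a back-of-the-envelope sketch: the $90,000 per minute booking rate and the 60 and 30 minute figures come from the text above, while the function name `downtime_cost` is mine.

```python
BOOKING_RATE_PER_MINUTE = 90_000  # USD per minute, from the figures above


def downtime_cost(minutes: int, rate_per_minute: int = BOOKING_RATE_PER_MINUTE) -> int:
    """Lost revenue in USD for a given number of minutes of downtime."""
    return minutes * rate_per_minute


# One hour of cutover downtime for the full migration.
print(f"${downtime_cost(60):,}")   # $5,400,000

# A single 30-minute reboot on its own.
print(f"${downtime_cost(30):,}")   # $2,700,000
```

Even the reboot by itself works out to $2.7 million at this booking rate, which is why shaving the outage to zero matters so much here.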