A Year Ago Today:

2010 has been a year of significant change for me. This time last year I was on my cell phone in the basement troubleshooting Exchange cluster problems at Nintendo. Since I was one of only two people managing storage, replication, and backups there, I was perpetually on call. As many of you who manage storage already know, the SAN (like the IP network) is the first thing to be blamed for application issues.

In January, many months of work designing and building a warm disaster recovery site culminated in a successful recovery test, proving the value of data de-duplication and SAN replication vs. tape backups.

A Career Change:

As February wrapped up I said goodbye to Nintendo after nearly 5 years there, and 12 total years working in internal IT, to make a significant change and become a Technology Consultant within EMC’s Telco, Media, and Entertainment division.

Moving from the customer side over to a manufacturer/vendor is a pretty big change. I still have to deal with politics within IT projects, but the politics are different. I still have to worry about financial concerns with IT projects, but those concerns are different. I still work with customers, but they are external customers instead of internal customers. For the customers I work with, I have become a knowledgeable consultant, a friend, and a scapegoat — anything they need me to be at the time.

My first 10 months at EMC have been a whirlwind tour. In the midst of new hire training in Boston, followed by EMC World 2010, also in Boston, I began meeting with customers, attempting to learn about their business and environments. Some customers want to tell you everything they can about their environment; others give up as little information as possible.

Phases of Transition:

I don’t know if this is typical of other people who move from being a customer to working for a vendor, but looking back I see distinct phases that I went through as I adjusted to this new career.

- The Fire Hose Phase – For the first couple of months, in addition to the new hire training and technical training, I had to learn how to use all of the internal tools, meet my customers, and try to glean as much information as possible about their IT infrastructures. I took lots of notes, and my Livescribe pen proved its worth in short order.

- The Overcompensation Phase – My predecessor was well liked by customers and coworkers, so I set out to be as helpful as possible in order to build a similar relationship with my customers. This backfired in some ways, worked in others, and eventually taught me that I really should just focus on what my customers need and the rest will fall into place.

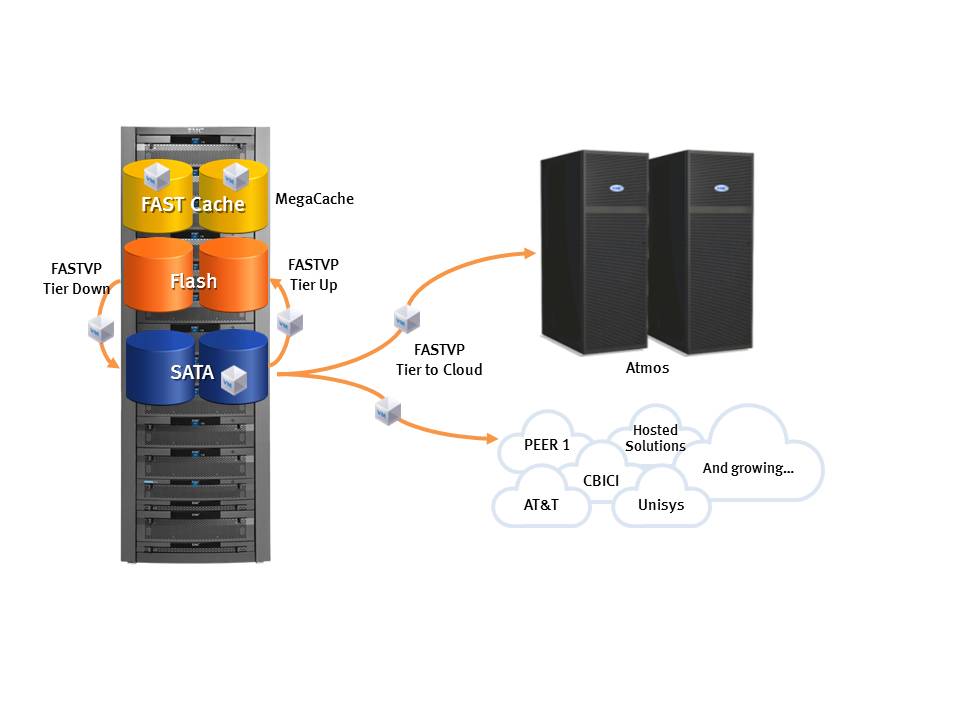

- The Competency Phase – As I finally settled into the new job and got comfortable, I was able to start taking on more complex requests from customers. I had a better understanding of the capabilities of EMC products and how those capabilities mapped to business problems. At this point I had figured out my role within EMC as well as with EMC’s customers.

Working for EMC:

Now, as I look back at the past 10 months at EMC, I’m amazed at what I was able to accomplish coming into a sales organization for the first time. EMC has immense amounts of training available, and the people are all extremely helpful and forgiving. One of the things that surprised me is how accessible everyone is for a 45,000-person company. If I need detailed technical information on Symmetrix, I can email an engineer in Hopkinton, MA and within minutes get a very detailed reply, or in many cases a call back. In the past 6 months, I’ve had Product Managers, VPs, Engineers, and even technical folks from other divisions on the phone, after hours, helping me get information together for my customers.

While I was getting used to my new job, my wife and I had our first child in August. Even though I’d only been with EMC for 6 months at the time, my management was very helpful, covering for me longer than they really needed to and ensuring that my workload was reasonable enough to manage as I adjusted to being a new father.

I even achieved EMC Proven Professional certification along the way. EMC has a way of giving you the tools to succeed, and then allowing you to make the decision on how and whether to use them. It’s a competitive environment in a very positive way, where everyone wants everyone else to be successful as opposed to succeeding at another’s expense.

Looking Forward:

As 2010 comes to an incredible close for me, my division, and EMC as a whole, 2011 is shaping up to be great as well. There are some changes coming on January 1st for my division that will affect me a little, but I believe they will be positive changes overall. Next year I hope to continue honing my skills as a blogger and in my official role as Technology Consultant. Happy Holidays and New Year to you all.